Fashion Tech for a Better Tomorrow, Today

Introducing the world’s first AI-powered Materials Management Platform, accelerating the transition to a sustainable, net-zero textiles economy

Introducing a better way to work

Materials Management Platform

Transforming the Way Fashion Brands and Vendors Collaborate for the Better

Better, Faster Sourcing

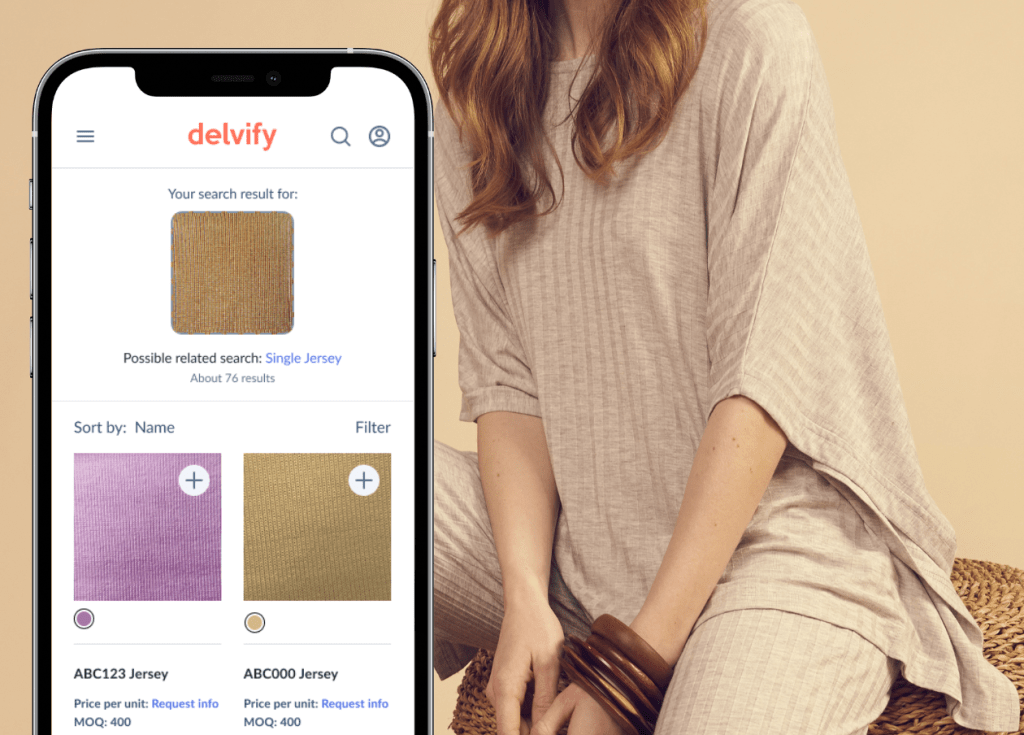

AI-Powered Search

Find the right materials each time with our AI-powered search that includes Visual Search and NLP technology.

- Visual Search: Upload photos of sample materials to find your ideal match

- Text Search: Search using qualitative and quantitative criteria

- Identify materials at a composition level

Better Choices, Better Costs

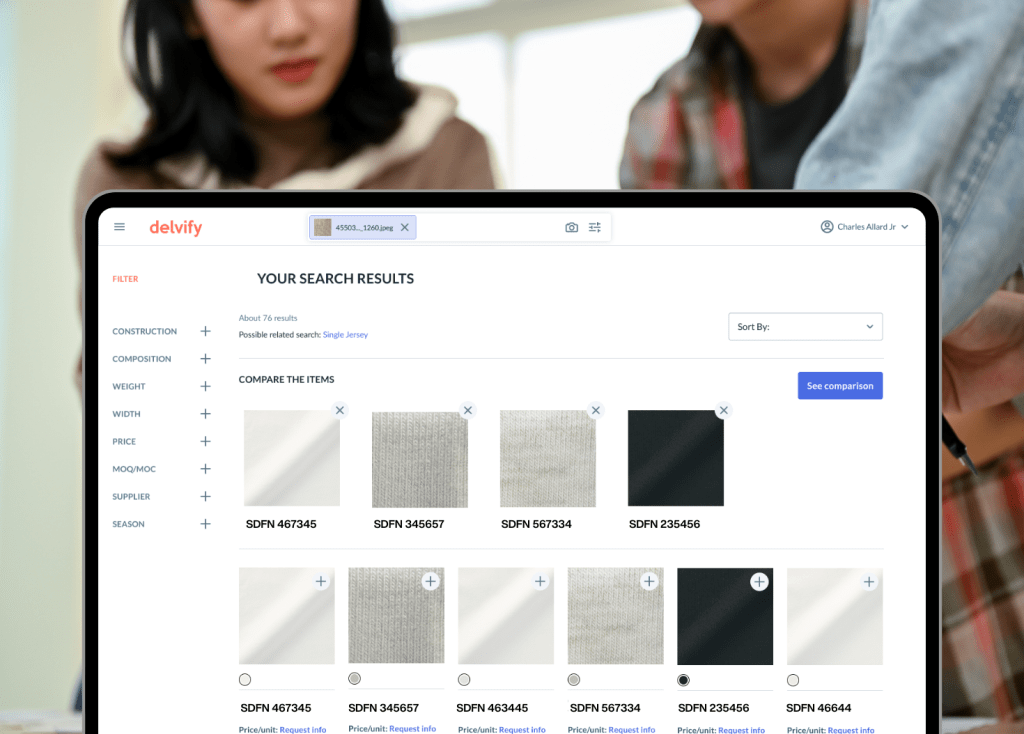

Discover Materials at Your Fingertips

Identify the right materials at any chosen level and compare them with our Smart Filter to accurately determine the best choices for your design faster.

- Access a database of all your materials, available 24/7

- Refine your results with our built-in filters

- Discover similar materials with our recommendation models

- Shortlist and compare materials and pricing options

Better Collaboration and Communication

Facilitate Teamwork with Ease

Create and share material collections with your team for effortless collaboration across the supply chain.

- Organize and manage Collections

- Share customizable projects with team members and suppliers for increased visibility

- Reduce miscommunication, increase transparency

WHY DELVIFY?

Transforming the way fashion brands and vendors collaborate for material sourcing

Join the Success

Don’t just take our word for it

"It also improved our 'right first time' sourcing requests on materials by 62%. We now have more space on the timeline for testing approval, lab dip and fit."

Better for the Planet

Achieve Your Sustainability Goals

Reduce textile waste and your carbon footprint through accurate fabric selection. Provide transparency within your supply chain to advance your company's sustainability goals.

Let’s Create Something Better Together, Today

Schedule a free demo with our team and let’s make things happen!